Over the last few years, working with startups in the Meta Llama Incubator and with SMEs across Southeast Asia trying to implement AI in their workflows has taught me something important about AI risk that most people misunderstand. I can tell you exactly why most of these implementations are DOA: the teams behind them treat AI like a faster version of Google.

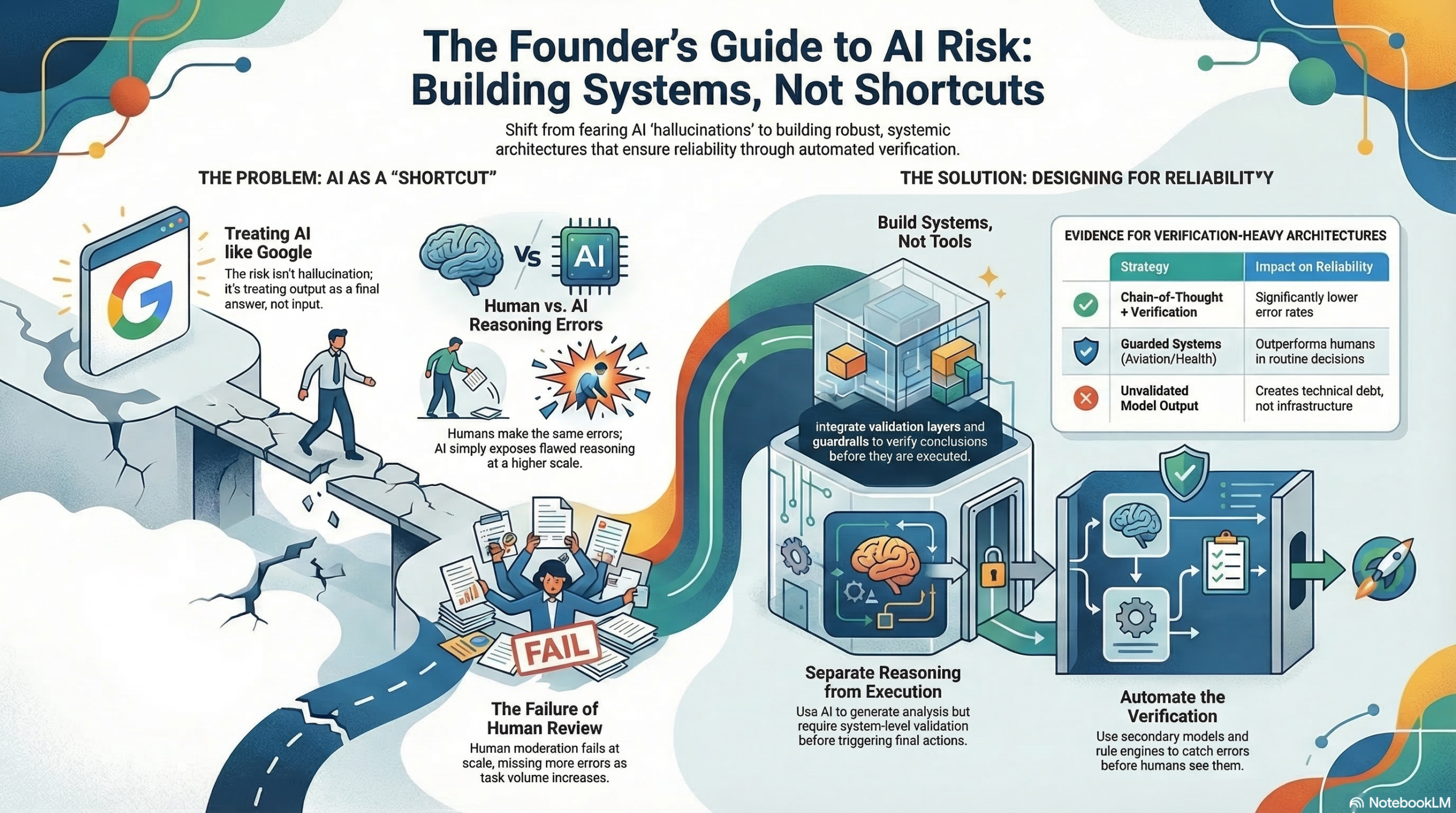

Founders are paralyzed by the fear of “hallucinations,” yet they are missing the much larger architectural disaster right in front of them. The fundamental mistake isn’t a technical glitch; it’s a conceptual failure. You are using a reasoning engine as if it were a search engine. If you treat AI as a source of truth rather than a system of logic, you haven’t built a product, you’ve built a liability.

AI is a Reasoning Input, Not a Final Answer

AI does not invent errors out of thin air; it simply scales the ones you already have. After analyzing thousands of pitches, I’ve seen founders reject automated workflows because “AI can’t be trusted,” only to make the exact same judgment errors manually: confirmation bias, recency bias, and anchoring on the first number they see.

The difference is that AI exposes this flawed reasoning at a speed and scale that humans can’t match. We hold AI to a standard of perfection we don’t even apply to our own VPs. To win, you have to stop looking at the output as a finished product.

“The issue isn’t that AI hallucinates. The issue is you’re treating its output as a final answer instead of a decision input.”

Stop Building AI Tools; Start Building AI Systems

In hundreds of the applications I reviewed, the “AI Strategy” was nothing more than a prompt box: a tool, not a system. A tool is when you give a model a task and pray for a correct result. A system is an architecture of validation layers, outcome checks, and guardrails that test whether a conclusion makes sense before it ever touches a customer.

Look at the aviation industry. Planes don’t stay in the air just because a pilot is sitting in the cockpit; they stay up because the entire system is designed to verify decisions automatically. When you deploy AI as a standalone tool without these validation layers, you aren’t building infrastructure, you are building technical debt that will eventually bankrupt your operational efficiency.

Automated Verification Outperforms the “Human-in-the-Loop”

Founders often cling to “human-in-the-loop” as a comfort blanket, but let’s be clear: human review is a scalability trap. My observations across use cases confirm that humans are the ultimate bottleneck. Worse, moderation studies show that we actually become less reliable at high volumes; we miss errors because we get complacent.

If you want to scale, you need a “Risk Architecture” that combines internal and external verification:

- Internal Verification: Use Chain-of-Thought (CoT) reasoning. Research from OpenAI and Anthropic suggests that having a model “show its work” before providing an answer can meaningfully reduce error rates, particularly on multi-step reasoning tasks.

- External Verification: This is your secondary line of defense. Use Secondary Models to audit the first, Rule Engines to catch impossible data points, and Logic Checks that act as hard-coded kill switches.
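The external verification layer described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `Proposal` type, the refund scenario, the rule names, and the `HARD_LIMIT` value are all hypothetical, and the secondary model is stubbed as a plain callable rather than a real API call.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Proposal:
    """Hypothetical structured output from a primary model (a refund suggestion)."""
    customer_id: str
    refund_amount: float
    order_total: float


# A rule returns an error message, or None when the proposal passes.
Rule = Callable[[Proposal], Optional[str]]


def amount_not_negative(p: Proposal) -> Optional[str]:
    return "refund is negative" if p.refund_amount < 0 else None


def refund_within_order_total(p: Proposal) -> Optional[str]:
    return "refund exceeds order total" if p.refund_amount > p.order_total else None


# Rule engine: catches impossible data points.
RULES: List[Rule] = [amount_not_negative, refund_within_order_total]

# Logic check: a hard-coded kill switch (the value is an assumption).
HARD_LIMIT = 500.0


def verify(
    p: Proposal,
    rules: List[Rule] = RULES,
    auditor: Optional[Callable[[Proposal], bool]] = None,
) -> List[str]:
    """Return every reason the proposal fails; an empty list means it passed."""
    failures = []
    for rule in rules:
        message = rule(p)
        if message is not None:
            failures.append(message)
    if p.refund_amount > HARD_LIMIT:
        failures.append("kill switch: refund above hard limit")
    # Secondary model audit, stubbed here as any callable returning True/False.
    if auditor is not None and not auditor(p):
        failures.append("secondary model rejected proposal")
    return failures


if __name__ == "__main__":
    print(verify(Proposal("c1", 20.0, 50.0)))
    print(verify(Proposal("c1", 80.0, 50.0)))
```

Returning a list of failures rather than a single boolean keeps the reason for each rejection visible, which makes it easier to track where the primary model tends to go wrong.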

Automated systems in high-stakes fields like medicine and aviation can already match or outperform humans in many routine decisions when these guardrails exist. If you’re still relying on a human to “double-check” every AI output, you don’t have an automated business; you have a very expensive manual one.

Separate Reasoning from Execution

The most successful founders I advise follow a strict “Fraud Detection” model: they decouple reasoning from execution.

AI should be used to generate analysis, identify patterns, or weigh options. However, the actual execution—sending that high-stakes email, approving a five-figure payment, or modifying a production database—must require a separate validation trigger. This distinction is non-negotiable for anyone building in fintech or healthtech. AI flags the transaction; the system (or a human-gated rule) executes it.
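One way to picture this separation is a gate that sits between the model's recommendation and the code that acts on it. The sketch below is illustrative only: `Recommendation`, `Decision`, `gate`, and the threshold values are hypothetical names and numbers, and the "execution" step just appends to a list instead of moving money.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Decision(Enum):
    EXECUTE = "execute"
    NEEDS_HUMAN = "needs_human"
    BLOCK = "block"


@dataclass(frozen=True)
class Recommendation:
    """What the model produces: analysis only, never a side effect."""
    action: str        # e.g. "approve_payment"
    amount: float
    confidence: float  # model-reported score in [0, 1], treated as a hint


# Illustrative thresholds; real values depend on the business.
AUTO_APPROVE_LIMIT = 1_000.0
MIN_CONFIDENCE = 0.9


def gate(rec: Recommendation) -> Decision:
    """Validation trigger: deterministic rules decide what may run."""
    if rec.amount <= 0:
        return Decision.BLOCK
    if rec.amount > AUTO_APPROVE_LIMIT or rec.confidence < MIN_CONFIDENCE:
        return Decision.NEEDS_HUMAN
    return Decision.EXECUTE


def execute(rec: Recommendation, decision: Decision, log: List[str]) -> bool:
    """Execution path: acts only when the gate has explicitly allowed it."""
    if decision is Decision.EXECUTE:
        log.append(rec.action)  # stand-in for the real side effect
        return True
    return False


if __name__ == "__main__":
    log: List[str] = []
    rec = Recommendation("approve_payment", 25_000.0, 0.97)
    decision = gate(rec)
    print(decision, execute(rec, decision, log))
```

The model never calls `execute` directly. A five-figure payment is routed to a human no matter how confident the model claims to be, because the gate is plain code that the model cannot talk its way around.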

“The founders who win with AI won’t be the ones who avoid it. They’ll be the ones who design it like a system, not a shortcut.”

From Productivity to Decision Quality

Stop tracking how much “faster” your team is moving. Speed is irrelevant if you’re heading toward a cliff. The shift you must make is from tracking “productivity” to tracking “decision quality.”

Start measuring your error rates, your correction rates, and the downstream impact of every AI-driven choice. Stress-test your workflows by running adversarial cases—try to break your system on purpose before the market does it for you. Finally, assign clear human accountability; someone must own the outcome of every automated process.
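As a rough starting point, the error and correction rates mentioned above can be computed from a simple log of reviewed decisions. This is a sketch under stated assumptions: the `DecisionRecord` fields and the definition of "correction rate" (the share of wrong decisions that were caught and fixed) are one possible choice, not a standard.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class DecisionRecord:
    """One reviewed AI-driven decision (hypothetical log format)."""
    correct: bool    # did the decision hold up on review?
    corrected: bool  # if it was wrong, was it caught and fixed?


def decision_quality(records: List[DecisionRecord]) -> Dict[str, float]:
    """Compute the error rate and the share of errors that were corrected."""
    total = len(records)
    if total == 0:
        return {"error_rate": 0.0, "correction_rate": 0.0}
    errors = [r for r in records if not r.correct]
    fixed = sum(1 for r in errors if r.corrected)
    return {
        "error_rate": len(errors) / total,
        "correction_rate": fixed / len(errors) if errors else 0.0,
    }


if __name__ == "__main__":
    sample = [
        DecisionRecord(correct=True, corrected=False),
        DecisionRecord(correct=True, corrected=False),
        DecisionRecord(correct=False, corrected=True),
        DecisionRecord(correct=False, corrected=False),
    ]
    print(decision_quality(sample))
```

Feeding adversarial test cases through the same log gives a before-and-after view of whether a new guardrail actually reduces the error rate.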

The winners in the AI race will be defined by the strength of their risk architecture, not the size of their context window. If you’re pitching investors and your AI strategy is “we use GPT-4,” you aren’t ready for the big leagues. You’re just another wrapper waiting to be unwrapped.

Are you building a shortcut that creates debt, or a system that scales intelligence?